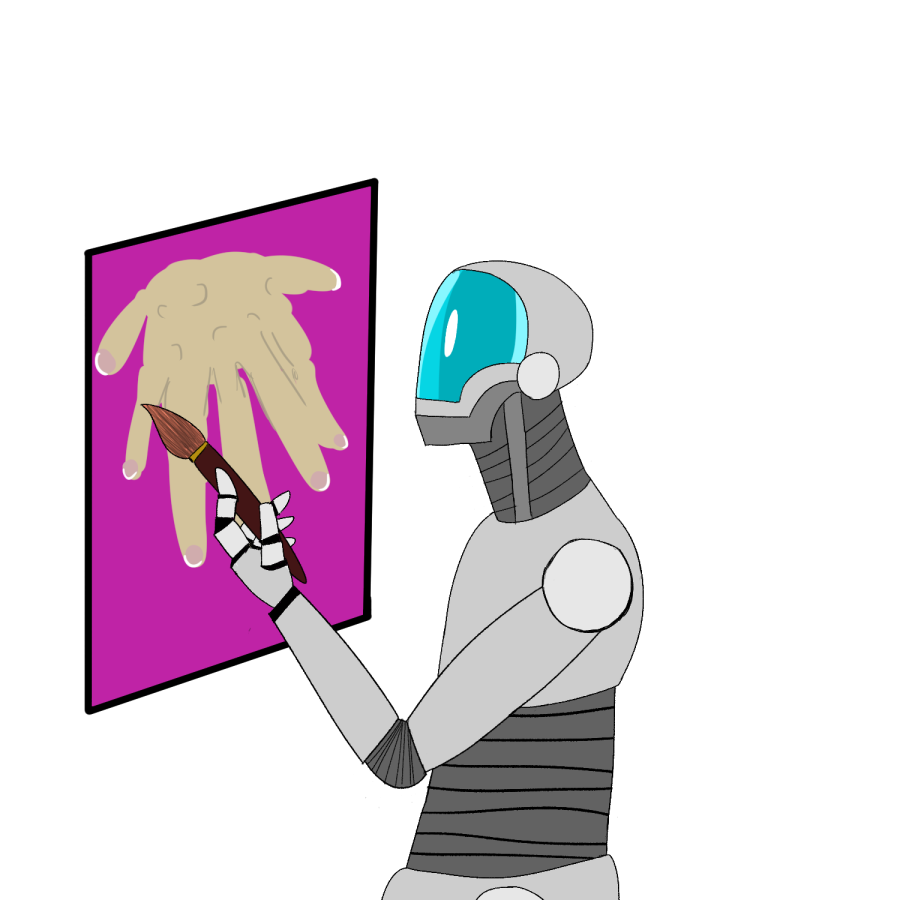

Opinion: The future filled with AI’s work

A look into the ethics of the artwork and deepfakes being created online, thanks to AI

Artificial intelligence (AI) has advanced rapidly in recent years. While ChatGPT, an AI program that writes text based on prompts entered by the user, has been at the forefront of this discussion, visual and audio deepfakes of people have also raised ethical concerns.

Deepfakes are AI-generated sounds or images meant to pass as real. One of the more common examples is the practice of using AI to map one person’s face onto another person. For example, AI could turn a video of a random person dancing into a video of Tom Cruise dancing by placing Cruise’s face over the existing face in the first video.

Though this technology raises many ethical and political concerns, tech industry leaders dismiss them with the argument that the generated content appears unrealistic and obviously fake. While that may hold for some viewers, not everyone can tell the difference between AI-generated content and real content. Furthermore, as the technology evolves, this excuse will become less and less valid as the content becomes more and more realistic.

One of the many fears surrounding this technology is its political implications. Users can make a famous figure, such as a politician, appear to say anything they want. For example, TikTok is riddled with videos of “U.S. Presidents,” most commonly Obama, Trump and Biden, live-streaming their gameplay of Minecraft or other video games. These videos, made with AI voice deepfakes, show how realistically people can put words in these figures’ mouths. While this has some comic value, it could also cause major worldwide problems should someone use it to spread fake news about a politician’s stance on a particular issue or the status of their health.

In fact, after Ali Bongo, the president of Gabon, suffered a stroke in fall 2018, some observers suspected that the official video of his first speech after the stroke was a deepfake. That suspicion contributed to a coup attempt against him by military officials who believed his health was still too poor for him to run the country.

Using someone’s appearance online without their knowledge raises concerns that go beyond politics as well. Deepfakes can also be used to create sexual content without the consent of the person depicted, often targeting celebrities as well as children. With this use of the technology, the issue is no longer solely ethical but also legal.

This use of the technology is problematic and hard to control because the generated content spreads so quickly. It also spreads across borders, and the laws governing it change from one country to the next. So, content originating in America could be banned in America but allowed in Germany. Even with these concerns, laws that specifically regulate this technology are few and far between, since AI-produced deepfakes are so new and are developing so quickly.

As of right now, creating quality deepfakes still requires a lot of technical knowledge, so politicians are slower to regulate the content because few people are skilled enough to create it. However, lawmakers still face pressure from victims of the technology and from lobbying organizations to create new laws, since the technology is more likely to be abused as it develops and becomes more accessible to everyday people.

There’s still hope for regulation in the future, as many countries are already working together to regulate criminal social media content such as posts related to human trafficking. Still, because safe harbor laws protect social media sites from being sued over content posted on their sites, social media companies have little internal motivation to regulate this content. They generally prefer to limit as little content as possible to keep engagement high. However, they will regulate content when laws apply to it, such as posts about where and when to buy illegal substances. So, until laws specifically address problematic deepfakes, regulation is unlikely to occur.

Because this technology is so new, many questions surround it and its future. If it goes unregulated, its future use has the potential to be extremely harmful.

Hello, I am a junior Communication and Cinema Studies double major with a Geosciences minor. I am the Website and Newsletter Editor at the Trinitonian,...